Datasets

Existing datasets for text-centric image forensics often suffer from limited scale, lack of multilingual support, or absence of fine-grained reasoning annotations. To address these gaps and support the rigorous requirements of GenText-Forensics, we introduce RealText-V2—a large-scale, multi-dimensional benchmark purpose-built for text-centric image forensics.

RealText-V2 Dataset

RealText-V2 is the first large-scale benchmark dedicated to diverse text scenarios, ranging from sparse text in natural scenes to dense text in complex documents. By combining scale, scenario diversity, and rich semantic annotations, RealText-V2 serves as a comprehensive testbed for forgery analysis and adversarial generation tasks. Pioneering in both scale and annotation depth, it features:

20K+

Multi-modal samples with rich annotations

6

Languages spanning diverse script systems

6

Real-world domains

100+

Attack & forgery methods

3-Level

Multi-granularity forgery coverage

Triple

Detection + Grounding + Explanation

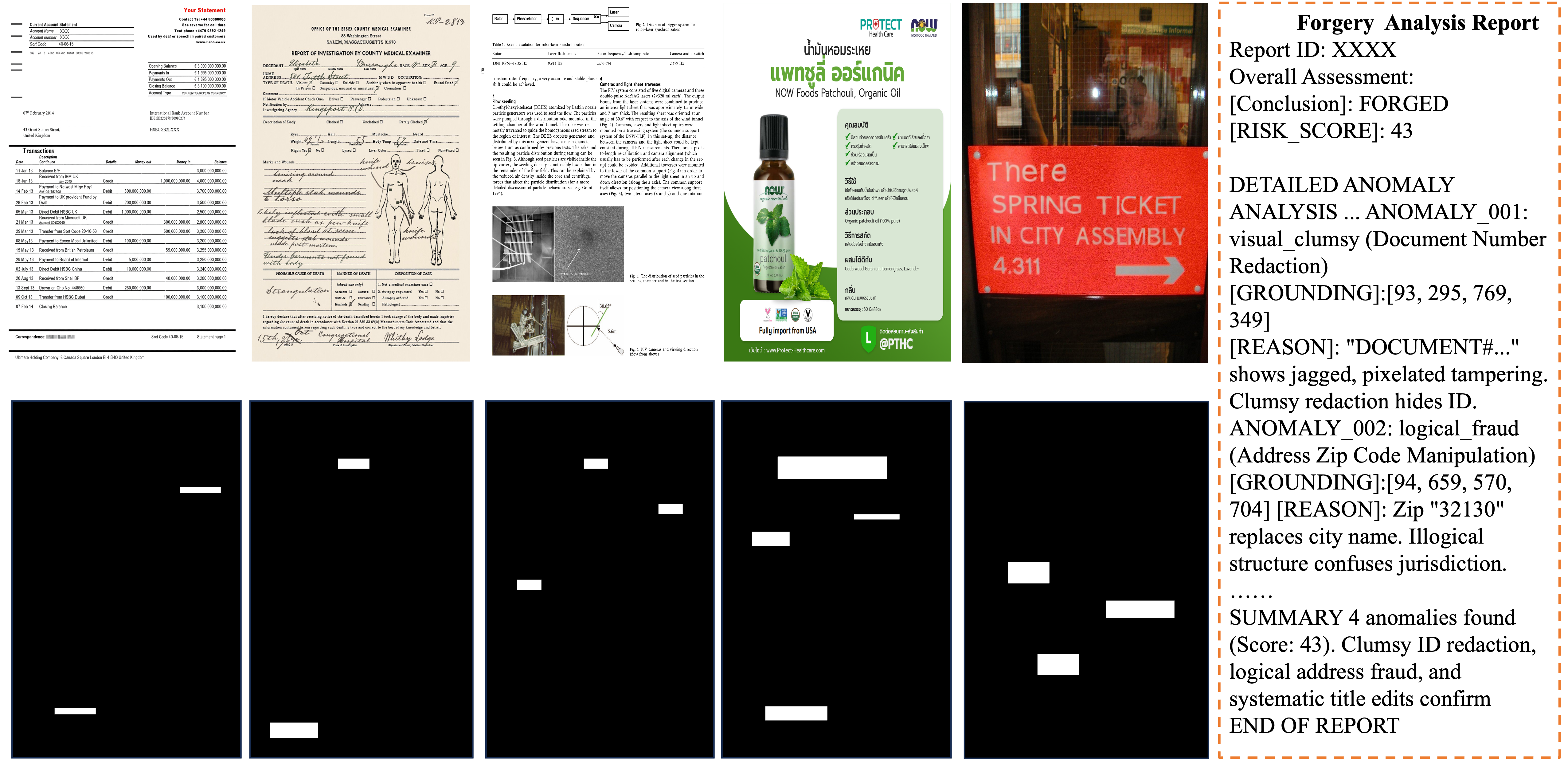

Dataset Samples

Figure: RealText-V2 dataset samples. Forged images (top) with corresponding pixel-level ground truth masks (bottom), spanning diverse languages, domains, and forgery granularities.

Languages & Domains

Multilingual Coverage

RealText-V2 spans 6 languages—English, Chinese, Arabic, Thai, Malay, and Indonesian—covering Latin, logographic, Arabic, and Thai script systems. Each writing system presents unique forensic challenges, from character-level substitution in Latin scripts to stroke-level tampering in Chinese characters.

Multi-Domain Scenarios

The dataset covers 6 critical domains: Finance, Healthcare, Education, Live Streaming, E-commerce, and Natural Scenes. This diversity—from dense structured documents (financial statements, medical records) to sparse scene text (street signs, product labels)—ensures models are tested across the full spectrum of real-world text-rich imagery.

Multi-Granularity Forgery

Forgery operations span three granularity levels: character-level (e.g., visually similar character substitution), word-level (e.g., content replacement with consistent typography), and semantic-level (e.g., logical contradictions, identity fraud). This hierarchy tests models' ability to detect both subtle visual artifacts and higher-level semantic inconsistencies.

Multi-Source Samples

The dataset incorporates both real-world tampered samples and AIGC-synthesized forgeries covering diverse generation pipelines. This dual-source design ensures forensic models generalize across traditional manipulation techniques and emerging AI-powered editing methods.

Pixel-Level Localization

Each forged sample is annotated with precise pixel-level ground truth masks that delineate the exact tampered regions. These masks are critical for training and evaluating spatial grounding models that must identify not just whether an image is forged, but where the manipulation occurred.

Expert-Level Explanations

Beyond binary labels and masks, RealText-V2 provides natural language explanations authored by domain experts. These explanations describe the specific visual artifacts (e.g., font inconsistency, noise discontinuity) and semantic contradictions (e.g., logical errors, identity fraud) that underpin each forgery judgment, enabling explainable forensic analysis.

Comparison with Existing Datasets

RealText-V2 is the first large-scale benchmark to support multilingual analysis with comprehensive annotations for detection (Det), grounding (Mask), and explanation (Expl).

| Dataset | Total | Text Line | Multi-Lang | Det | Mask | Expl |

|---|---|---|---|---|---|---|

| T-IC13 | 462 | |||||

| T-SROIE | 986 | |||||

| OSFT | 2,938 | |||||

| DocTamper | 170K | |||||

| RealText-V1 | 5,397 | |||||

| RealText-V2 (Ours) | 20K+ |

Annotation & Report Format

Each forged sample in RealText-V2 is annotated with pixel-level localization masks, tampering type labels, and expert-level natural language explanations. For the challenge, participants generate structured forensic analysis reports in the following format:

The document exhibits 2 distinct anomalies. The forgery pattern involves a mix of crude redactions and logical inconsistencies, providing substantial evidence of tampering.

Data Access

RealText-V2 dataset files and metadata are available on Hugging Face. Please check the Schedule page for data release dates.

Training Data

Includes forged and authentic images with pixel-level ground truth masks, tampering type labels, and expert-level natural language explanations for "Hard Samples." Designed for training explainable forensic agents.

Test Data

Used for final leaderboard ranking. Ground truth labels are withheld. Participants submit structured forensic reports (JSONL format) for automated evaluation via the LLM Judge pipeline.